Hi,

We have set up a Commvault Media Agent to back up VMs using HotAdd transport mode.

The setup works, but its average throughput is less than ideal.

Below is the setup of the Media Agent:

- Directly FC-connected to an LTO-8 tape library with 4 FC drives

- 16 CPU cores

- 32 GB memory

With the same spec, our NetBackup environment achieves an average throughput of 300 MB/s, or roughly 1 TB/hour.

However, this Commvault Media Agent only achieves around 500 GB/hour, which is about half of what it should be capable of.
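
For anyone comparing the two figures, here is a rough sketch of the unit conversion behind them. It assumes decimal units (1 GB = 1000 MB, 1 TB = 1000 GB), and the function names are only illustrative, not part of any Commvault or NetBackup tooling.

```python
# Convert between MB/s and GB/hour, assuming decimal units (1 GB = 1000 MB).
SECONDS_PER_HOUR = 3600
MB_PER_GB = 1000


def mb_per_sec_to_gb_per_hour(mb_per_sec: float) -> float:
    """Return the hourly throughput in GB for a given rate in MB/s."""
    return mb_per_sec * SECONDS_PER_HOUR / MB_PER_GB


def gb_per_hour_to_mb_per_sec(gb_per_hour: float) -> float:
    """Return the per-second throughput in MB for a given rate in GB/hour."""
    return gb_per_hour * MB_PER_GB / SECONDS_PER_HOUR


# Figures quoted in this post.
netbackup_gb_per_hour = mb_per_sec_to_gb_per_hour(300)
commvault_mb_per_sec = gb_per_hour_to_mb_per_sec(500)
ratio = 500 / netbackup_gb_per_hour

print(f"NetBackup: 300 MB/s = {netbackup_gb_per_hour:.0f} GB/hour")
print(f"Commvault: 500 GB/hour = {commvault_mb_per_sec:.0f} MB/s")
print(f"Commvault runs at {ratio:.0%} of the NetBackup rate")
```

So 300 MB/s works out to a little over 1 TB/hour, and 500 GB/hour to roughly 139 MB/s in total across the drives.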

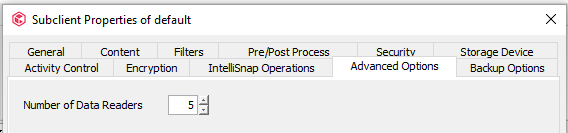

That said, in NetBackup we did increase Number_Data_Buffers and Size_Data_Buffers to maximise performance, so I’m wondering whether there are similar parameters in Commvault that I can tweak to make it run faster?

Thanks,

Kelvin