Hi Fellas,

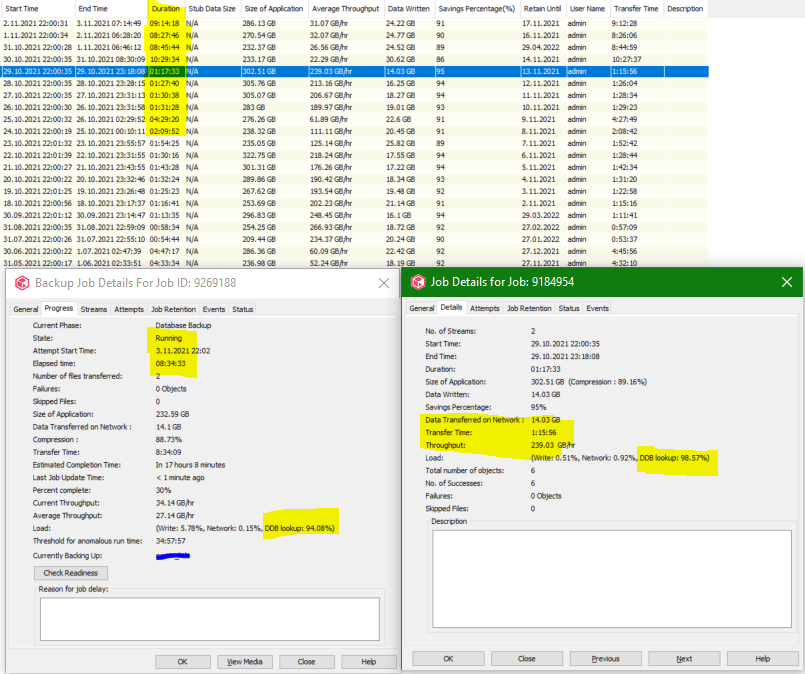

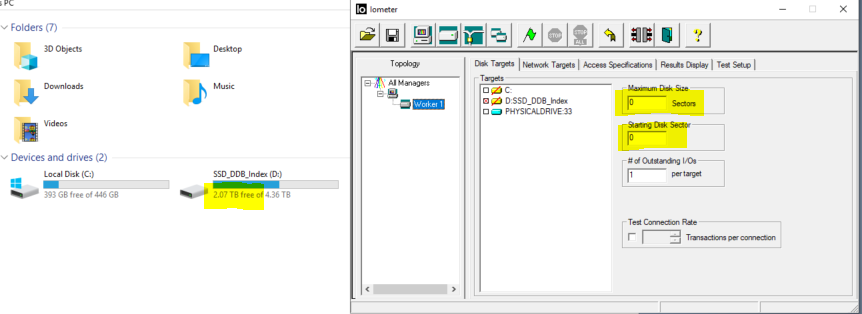

How should I interpret the figures in the screenshots? Do you think the job is wasting time on DDB lookups?

Best answer by Mike Struening RETIRED

Hi

I would definitely interpret those figures as showing that a large proportion of that time is spent on DDB lookups.

However, there might be many reasons why that task is taking time and we would need more background info to understand.

DDB responsiveness can be affected by many different factors. To give an idea:

These are a few things to consider, let me know if these questions help identify any potential issues.

Thanks,

Stuart

Hi Stuart,

Actually, I have a really strong MA, local SSD for the DDB and Index Cache, and a 40 Gb network connection between the MA and the client.

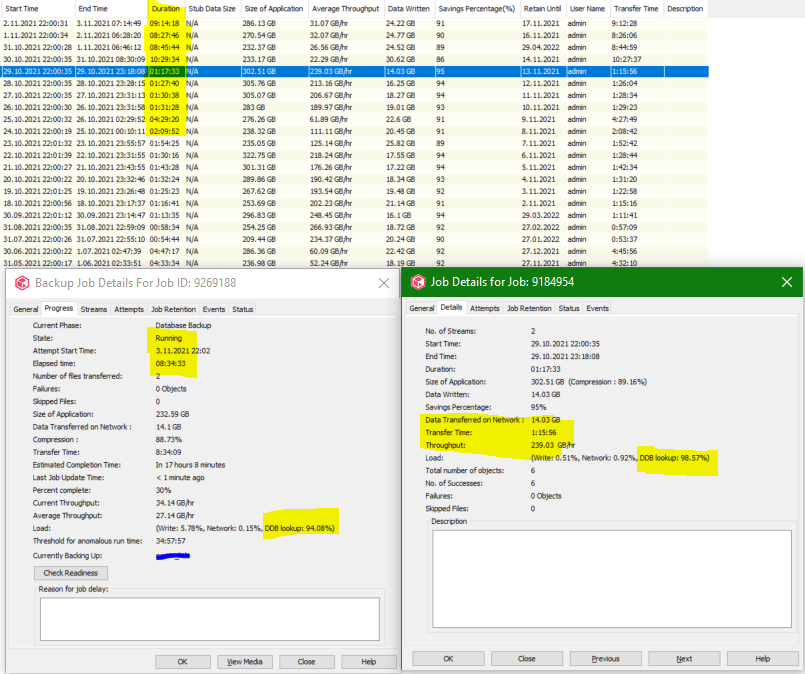

I suspect the DDB store. As you can see, there are 3 SIDBs, and the backups are writing to SIDB 78, which has a poor Q&I time.

My questions are:

1- Commvault created the new SIDB, so why were the backups not moved to it automatically?

2- I manually moved my backups from SIDB 78 to SIDB 86 with the workflow (Move Clients To New DDB). Why were they not moved to SIDB 94?

Hi

Those SIDB IDs aren’t clear from the screenshot provided; I can only see the job history and 2 highlighted job details.

We would need more info to make any assertions on DDB behaviour.

If your MA spec and DDB configuration are good, then we would need to understand why the Q&I times are poor and, additionally, why moving clients to a new DDB hasn’t worked as expected.

Thanks,

Stuart

Sorry, I forgot to add the screenshot. Here it is:

Overall, you have a large store (focusing on 78 which is our main offender) which is rather old (not that age alone is a factor).

Dedupe performance is almost always down to the disks themselves; otherwise it’s scale.

Have you run Iometer to eliminate the disks as a cause?

Definitely worth considering.

Also worth checking out the dedupe hardware reqs:

Based on what I see, you are set for Extra Large with 2 DDB disks, so that’s where I’d start looking (though you know your environment better).

Now if EVERYTHING checks out, Iometer and all, it might be worth opening a case, though my impression at this point is that either the hardware is not up to the task, or you’re edging up to the limits and need to seal.

As

The only differentiating factor I can see between the 2 new DDBs is Application Size. I would need to check with the teams here on the principles applied by the Move Clients To New DDB workflow.

The only idea I have right now is that it may select a new destination DDB for a client based on the Application Size already covered by the potential target DDBs. With 86 lower than 94, it would be more open to new clients.

Thanks,

Stuart

Hi

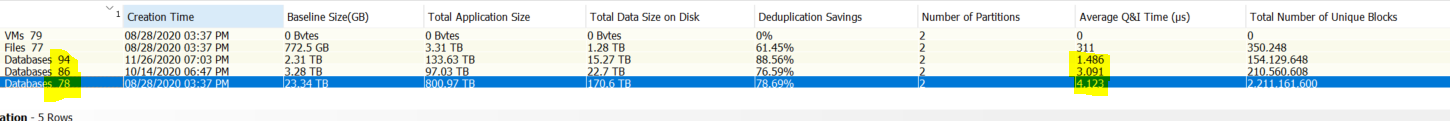

Could you please give me some advice about the Iometer test?

As you can see in the screenshot, I have one 4.36 TB SSD, which contains the Commvault binaries, the DDB and the Index.

I will run Iometer to measure the IOPS of our SSD but, as I mentioned above, the disk holds data and I don’t want to lose it.

The procedure says: “In the Maximum Disk Size and Starting Disk Sector boxes, set the default value to 0”, so I’m not sure whether I would lose data.

Good afternoon. Iometer does not overwrite your data or put your data at risk. It is a performance test of the disk. Any writes the test needs to make will go into the 500 GB of free space required to run it.

If the free space is less than 500 GB, then move the DDB as described in Moving a Deduplication Database.
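
Before starting the test, the free-space condition above can be checked with a few lines of Python. This is only a minimal sketch: the function names and the example path are hypothetical, and it assumes the 500 GB figure can be read as 500 GiB.

```python
import shutil

# 500 GB as quoted in this thread, interpreted here as GiB (an assumption).
REQUIRED_FREE_BYTES = 500 * 1024**3


def free_gib(path):
    """Return the free space, in GiB, on the volume that holds `path`."""
    return shutil.disk_usage(path).free / 1024**3


def has_room_for_iometer(path, required=REQUIRED_FREE_BYTES):
    """Return True if the volume holding `path` has at least `required` bytes free."""
    return shutil.disk_usage(path).free >= required


if __name__ == "__main__":
    ddb_volume = "."  # hypothetical: replace with the volume that hosts the DDB
    print(f"Free space: {free_gib(ddb_volume):.1f} GiB")
    if has_room_for_iometer(ddb_volume):
        print("Enough free space to run the Iometer test.")
    else:
        print("Less than 500 GB free: move the DDB before testing.")
```

The check only reads volume statistics, so it does not touch the DDB or the index data on the disk.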
