Hi Guys,

I finally found the exact article that describes a solution I want to implement, and I'm seeking opinions on whether to do it or not. Basically, I want to create multiple selective copies under a storage policy and associate them with different subclients/computers to meet a client’s tiering model and different retention requirements. Because I also want to implement deduplication between the primary copy and each selective copy (weekly and monthly), I’m weighing the option of creating each selective copy using a library instead of a storage pool, as described in the article below:

Article: https://documentation.commvault.com/commvault/v11/article?p=119730.htm

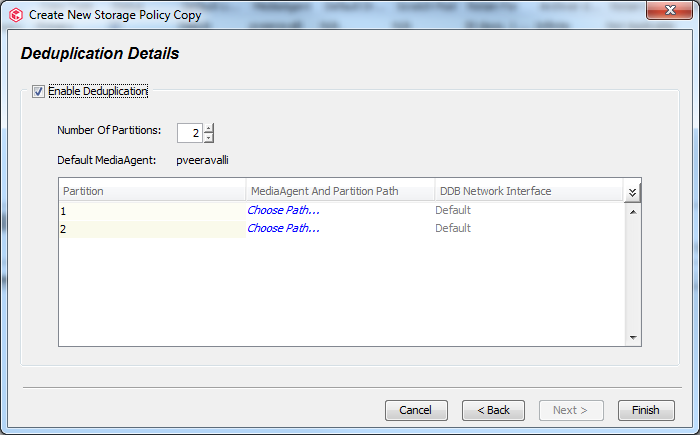

In step 17b, can I select a normal Windows folder as the Partition Path, e.g. D:\<randomFolder>? If I create additional selective copies under the same storage policy, can I use the same D:\<randomFolder> to deduplicate data between the primary copy and the additional selective copies, or do I have to create D:\<randomFolder1> and so forth for each copy?

I ask because I do not have enough dedicated Premium SSDs provisioned to create deduplication engines. Does this method work, and what are the pros and cons?

Best answer by Sean Crifasi
