I have OneDrive and Exchange Online backing up to an MA in Azure with blob storage. This all works fine, but I want a second, on-prem aux copy. I’m happy with the network costs, traffic and on-prem disk capacity.

The storage plan uses Cool for the blob storage. When the aux copy runs, it keeps stopping and starting, with a recall for each chunk.

Idea 1 - Ideally the aux copy would trigger a workflow, like the restore job does, to recall all the chunks in one go. Can this be enabled, or is it something in the dev pipeline?

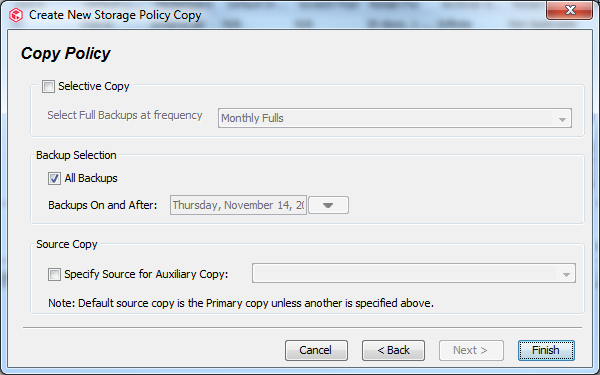

Idea 2 - Create a new extra ‘Primary’ with hot storage and 7 days’ retention, then copy this to the existing 6-month Primary (cold) and to the on-prem MA.

Idea 3 - Change the plan to hot, but I would prefer to manage costs.

Idea 4 - ?

Cheers

Best answer by chrism4444
