Hello,

I need your help ;-)

I was asked to prepare a tabular report showing the Front End data stored in our local library (NetApp).

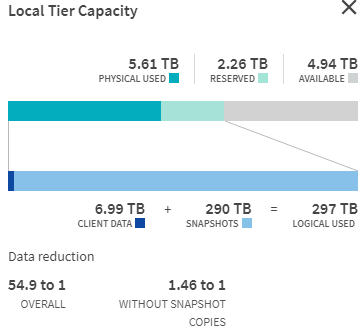

I already explained the difference between logical and physical usage, but my management still wants to see a list of everything that sums up to 297 TB.

I tried the chargeback report, but it's showing me data from June of last year.

Does anyone know how I can achieve this?