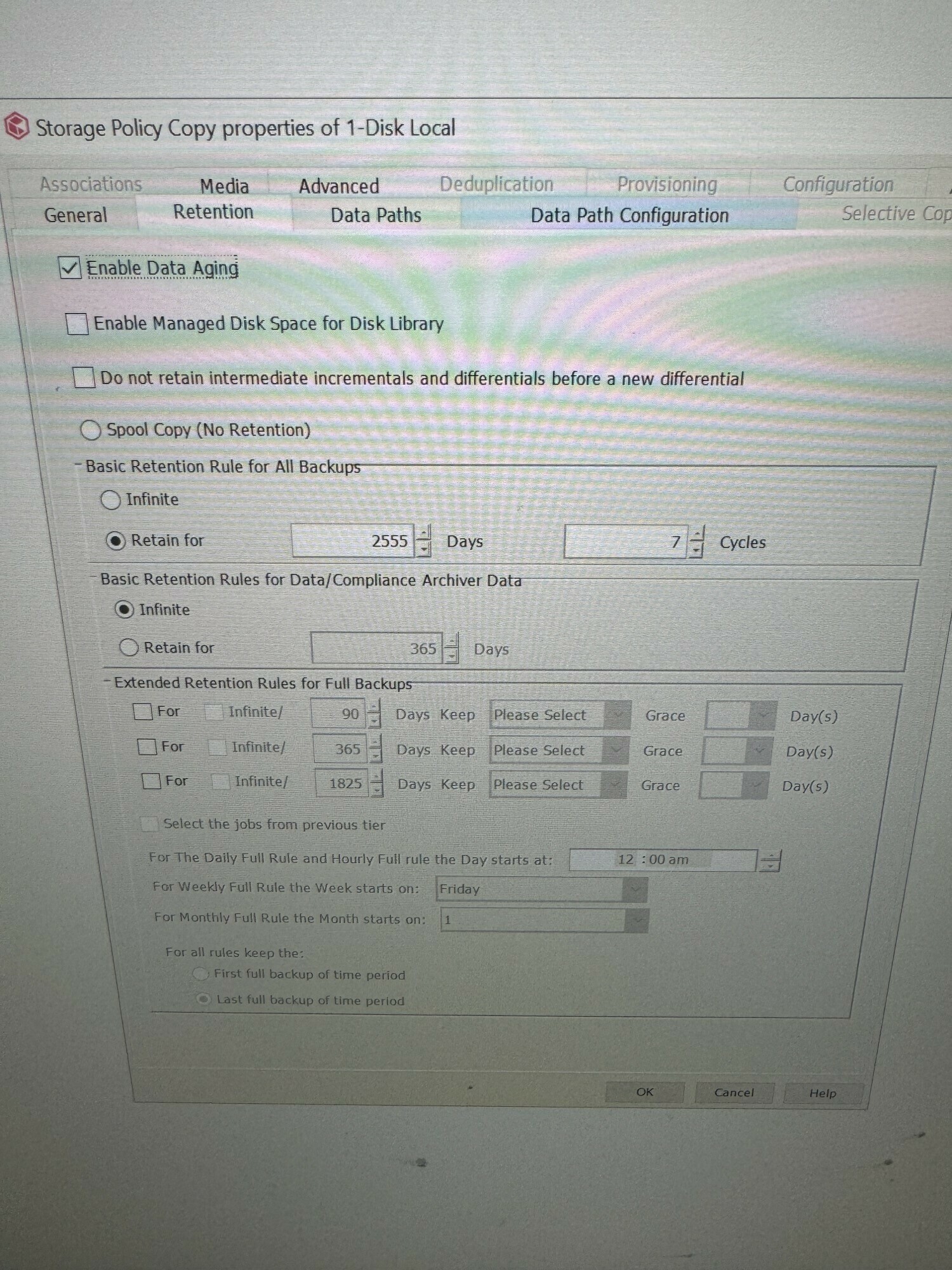

For archived data produced by the OnePass feature in Commvault, what determines the retention time in the Storage Policy Copy?

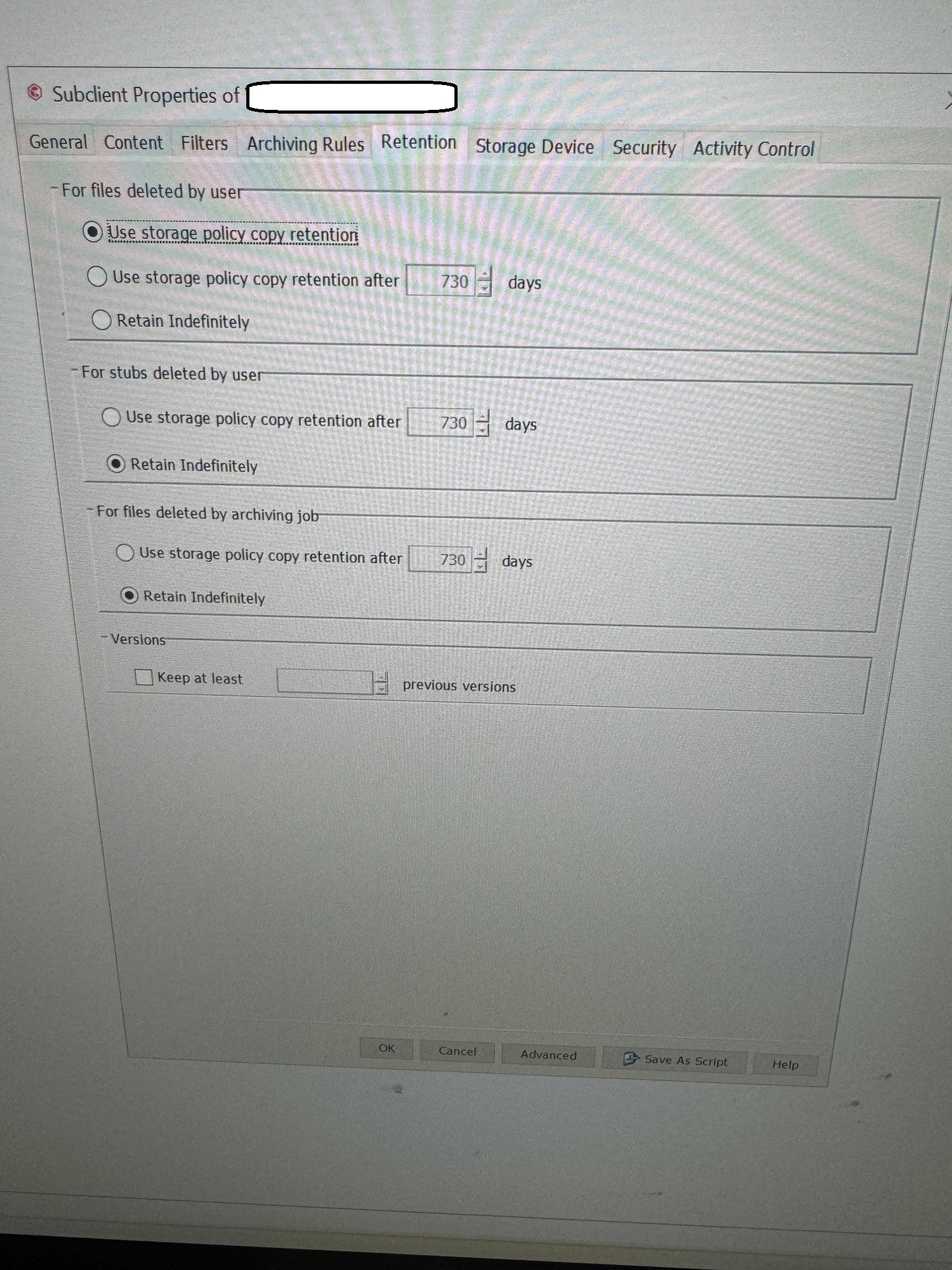

There is a “Basic Retention Rule for All Backups” and a “Basic Retention Rules for Data / Compliance Archiver Data”. If I’m right, the “Basic Retention Rule for All Backups” setting dictates retention for backups from subclients associated with that storage policy, while the latter applies only to data that was archived by OnePass and uses the same storage policy copy? So if the “Basic Retention Rules for Data / Compliance Archiver Data” setting is infinite, data created by OnePass will never age out?

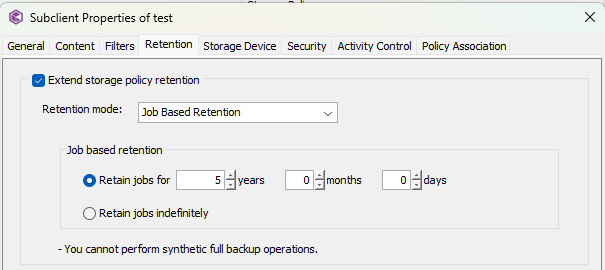

Secondly, if the schedule associated with OnePass is configured to run a nightly Incremental and never a Full or Synthetic Full, what is the impact of deleting a large number of Incremental backups in the backup chain? A Full was run only on the first night this was set up.

It was never intended to retain the archived files indefinitely, and as a result 3 years of unwanted, non-deduplicated data has been retained. Will deleting the Incrementals from immediately after the first Full up to the point we want to retain cause any issues? I’m assuming the associated stub files will remain unless manually deleted?