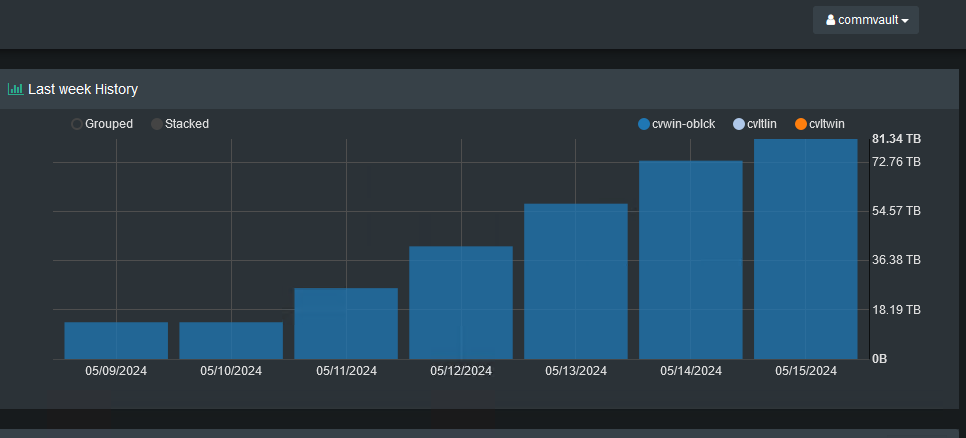

I’ve been discussing an issue with a Commvault user. They do not use Commvault deduplication for their backups because they write to an array that performs its own deduplication. They are now looking to create a Secondary Copy of their data to replicate to Scality Ring. However, my concern is that they will run into the same problem they hit when they tested Air Gap Protect: the secondary copy inherits the primary copy’s compression settings (not enabled), so deduplication performance is not where we would anticipate it to be.

If that’s the case, is there any other feature that would allow them to write both a non-deduplicated copy (primary) and a deduplicated copy (secondary) with different compression settings? The documentation makes it look like you can change the settings for a Storage Policy Copy, but I believe it refers only to the Primary Storage Policy Copy, since the top level explicitly states that the compression settings for Aux Copies adhere to the Primary Copy’s settings.

Is it possible to script this somehow via a Workflow? Alternatively, would it work to create a Secondary Copy with the Source Copy set to “Primary Snap”, since the source data is being protected via IntelliSnap?

Any thoughts or insight are greatly appreciated.