Hi Team,

We currently have quite a large Secondary Copy writing to object-based storage, and I am reviewing its health status (note - this is NOT cold storage in any way).

At the moment it looks pretty good, but I seem to remember that a while ago Commvault recommended periodically sealing these large DDBs.

I believe this was in order to maintain healthy DDB performance.

Does anyone know if this still applies? The reason I’m asking is that I haven’t seen that recommendation in the documentation so far, but it may be squirreled away somewhere.

To assist, here is a summary (approx):-

2 Partitions

Size of DDB 950 GB (each).

Unique Blocks 500 million

Q&I (3 days) 1200

Total Application Size 15 PB (yes, PB)

Total Data Size on Disk 550 TB

Dedupe Savings 96.5%

Infinite retention (critical data needed for longterm and potential legal needs).
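As a rough cross-check of the summary figures above, here is a minimal Python sketch. It assumes decimal units (1 PB = 1000 TB) and treats savings simply as one minus the ratio of data on disk to application size; the function names are illustrative only, and the figure Commvault reports may be calculated differently.

```python
def dedupe_savings_pct(app_size_tb: float, size_on_disk_tb: float) -> float:
    """Percentage saved, taken as 1 - (data on disk / application size)."""
    if app_size_tb <= 0:
        raise ValueError("application size must be positive")
    return 100.0 * (1.0 - size_on_disk_tb / app_size_tb)


def reduction_ratio(app_size_tb: float, size_on_disk_tb: float) -> float:
    """How many TB of application data are represented by each TB on disk."""
    if size_on_disk_tb <= 0:
        raise ValueError("size on disk must be positive")
    return app_size_tb / size_on_disk_tb


# Approximate figures from the summary (15 PB assumed to be 15000 TB).
app_tb = 15_000.0
disk_tb = 550.0

print(f"Savings: {dedupe_savings_pct(app_tb, disk_tb):.1f}%")   # Savings: 96.3%
print(f"Ratio:   {reduction_ratio(app_tb, disk_tb):.1f}:1")     # Ratio:   27.3:1
```

That lands close to the 96.5% shown in the console, which is about what I would expect given the figures are rounded.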

If I have this right, and based on the current docco:-

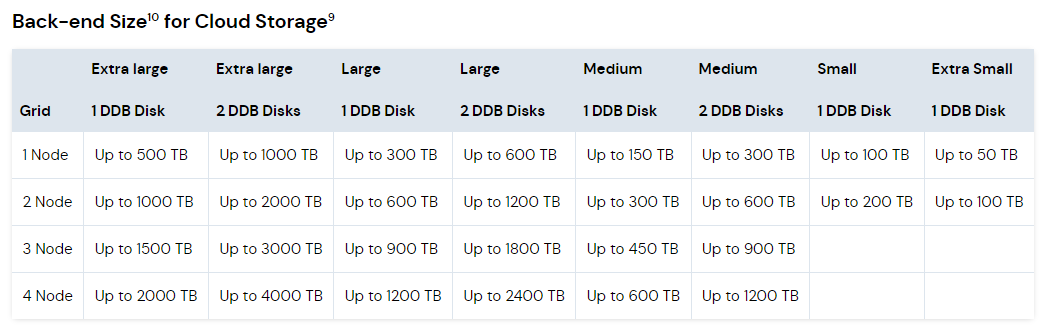

With a two-partition, one-disk setup (which we have), we can hit 1000TB of physically written BET.

So at 550TB we are well within parameters.

However, the question remains about sealing the store.

I am aware that although a Q&I of 1220 is ok, it is steadily creeping up.

Additionally, as we are using infinite retention, one day we are obviously going to hit the 1000TB mark.
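To put a rough timescale on that, here is a minimal Python sketch of the headroom arithmetic. The 550TB and 1000TB figures are the ones quoted above; the monthly growth rate is purely a hypothetical placeholder (I have not measured it), and linear growth is an assumption.

```python
def months_until_limit(current_tb: float, limit_tb: float,
                       growth_tb_per_month: float) -> float:
    """Months of linear growth left before current_tb reaches limit_tb."""
    if growth_tb_per_month <= 0:
        raise ValueError("growth rate must be positive")
    remaining_tb = max(limit_tb - current_tb, 0.0)
    return remaining_tb / growth_tb_per_month


current_tb = 550.0      # data physically written today (from the summary)
limit_tb = 1000.0       # BET figure for the current two-partition setup
assumed_growth = 10.0   # HYPOTHETICAL TB per month, replace with a measured value

headroom_tb = limit_tb - current_tb
used_pct = 100.0 * current_tb / limit_tb
months = months_until_limit(current_tb, limit_tb, assumed_growth)

print(f"Used: {used_pct:.0f}%, headroom: {headroom_tb:.0f} TB")
print(f"At {assumed_growth:.0f} TB/month: about {months:.0f} months to the limit")
```

Swapping in the real monthly growth of data written would show how soon question 3 below becomes pressing.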

I would summarize my questions as:-

1 - Should I seal this store to improve DDB performance?

2 - Can I assume that, with the underlying SPs being infinite retention, no data blocks will be impacted or aged out by sealing?

3 - How do I mitigate reaching that 1000TB BET limit? Do I simply add an extra DDB to each of the current Media Agents, or am I likely to need extra MAs? Hopefully I can just add another partition to each MA, as looking at the above docco we have scope to go to “Extra Large 2 DDB Disk” mode.

Thanks in advance ….
