Hello community,

We are trying to migrate our SAN storage to an S3 cloud library.

As suggested, we followed these steps:

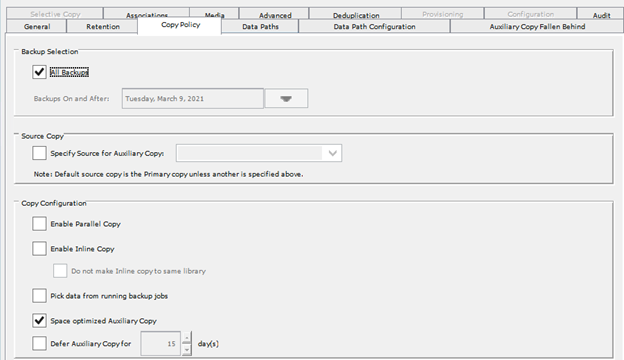

1. Configured a new global dedupe storage policy using the new S3 bucket and MA.

2. Configured new secondary copies in the existing storage policies, pointing to the new S3 dedupe storage.

3. Ran the aux copy.

We have a huge amount of data, so we contacted Commvault support to find out when the aux copy will complete. The aux copy has now been running for more than 4 months.

Support mentioned the following points:

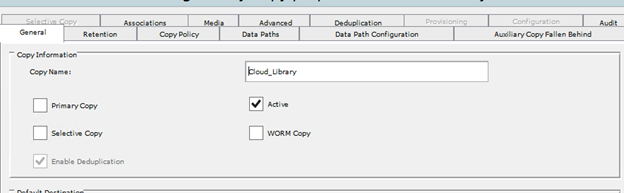

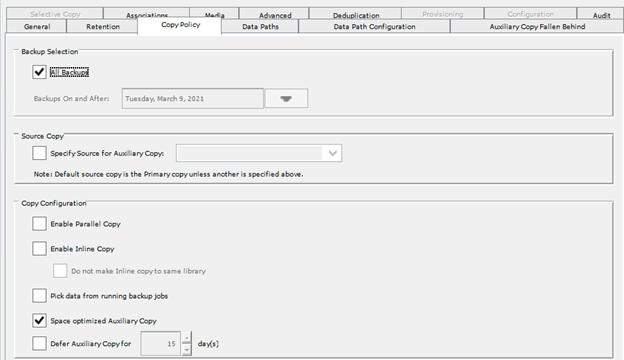

- Your current configuration allows new backups to be selected and prioritized over older data.

- You are also configured to copy all data to the cloud, and support mentioned that we are not using dedupe for the aux copy.

How can we make sure we have an optimal aux copy configuration?

Please share your inputs.

Thanks in advance

Spartan9