We’ve been using disk libraries on our offsite DR MediaAgent for years now. It only holds the last week of changed data, in case of absolute disaster (we keep a year’s worth of changes on our on-prem MediaAgent).

Our office just got a large amount of S3-compatible storage and gave us a path to it. I successfully added a new “S3-compatible” cloud library to my CommServe and I can see it. It has a single mount path, whereas my disk library has 50 mount paths, one for each disk.

My goal is to change the settings of my existing Storage Policy, called “SSCC Auxilary Copy”, so that it stops using the disk library and starts using the cloud library for all future aux copies.

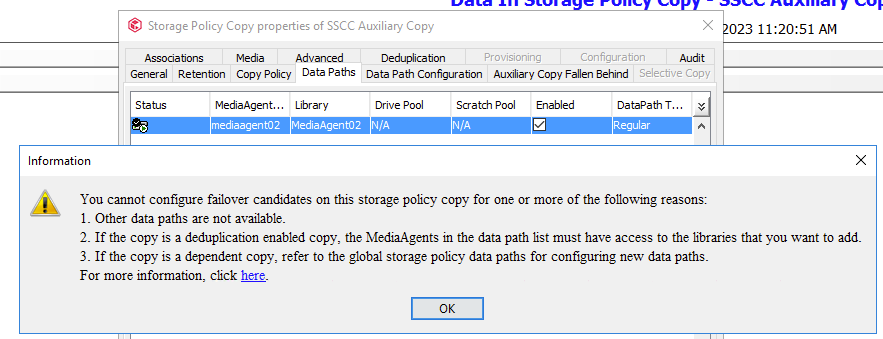

I went into the properties of the Storage Policy and I see a “Data Paths” tab that contains only a single item, listing the correct MediaAgent02 and the current disk library, which is also named “MediaAgent02”. I think this is what has to be changed. My new cloud library is named “DoIT-S3-Storage”.

But when I click “Add” in this tab, I get the following error. When I click the URL pictured, my browser opens but just redirects me to the home page of the Commvault Essential documentation site (https://documentation.commvault.com/2022e/essential/index.html), even though I am already logged into the documentation site.

Is there existing documentation that covers what I’m describing? I’m not sure how to proceed.