I have a customer (IHAC) using NetApp storage for their library mount paths, and it does not support sparse files.

Without sparse file support, we cannot accurately calculate how much data the mount paths hold. That is expected and normal in this setup.

But this MSP customer still charges tenant users based on BET (I have been trying to eliminate this for a couple of years, without success so far), so they need to know how much data is actually occupied.

Getting accurate data is difficult, if not impossible, especially when a DDB is in use: Commvault retains stats only for non-aged jobs, so it is hard to work out how much has been written and purged to date.

Still, we can get a rough picture of usage from the Commvault logs, specifically from DDB Verification job results.

After running DDB Verification, the reclaimable stats are written to a log file named DDBMntPathInfo.log on the MA server(s), like this (actual data from the customer's site):

9601 2581 10/31 08:06:34 22551699 ====================================================================

9601 2581 10/31 08:06:34 22551699 TOTAL for all MPs [5], EngId [50], Frag. threshold [40%], Attempt # [1], Datapath MAs # [1]

9601 2581 10/31 08:06:34 22551699 Chunks - Total [12825]

9601 2581 10/31 08:06:34 22551699 Fragmented: >= 20% [ 7871], >= 40% [ 7329]

9601 2581 10/31 08:06:34 22551699 >= 60% [ 6923], >= 80% [ 6454]

9601 2581 10/31 08:06:34 22551699 Sfile containers - Total [1446801]

9601 2581 10/31 08:06:34 22551699 Fragmented: >= 20% [ 100196], >= 40% [ 84386]

9601 2581 10/31 08:06:34 22551699 >= 60% [ 74944], >= 80% [ 63801]

9601 2581 10/31 08:06:34 22551699 Blks Count - Total [430316410], Valid [133419215], PhyRemoved [291591294], Dormant [5305901, 3.82%]

9601 2581 10/31 08:06:34 22551699 Reclaimable: @ 20% [ 5039751, 3.63%], @ 40% [ 4699341, 3.39%]

9601 2581 10/31 08:06:34 22551699 @ 60% [ 4339324, 3.13%], @ 80% [ 3783925, 2.73%]

9601 2581 10/31 08:06:34 22551699 Blks Size (in Bytes) - Total [31593769131630], Valid [10422656366157], PhyRemoved [20571721787482], Dormant [599390977991, 5.44%]

9601 2581 10/31 08:06:34 22551699 Reclaimable: @ 20% [ 571610827524 ( 532.35 GB), 5.19%], @ 40% [ 529661153256 ( 493.29 GB), 4.81%]

9601 2581 10/31 08:06:34 22551699 @ 60% [ 477285420623 ( 444.51 GB), 4.33%], @ 80% [ 397514060948 ( 370.21 GB), 3.61%]

17770 456a 10/31 09:41:35 22552392

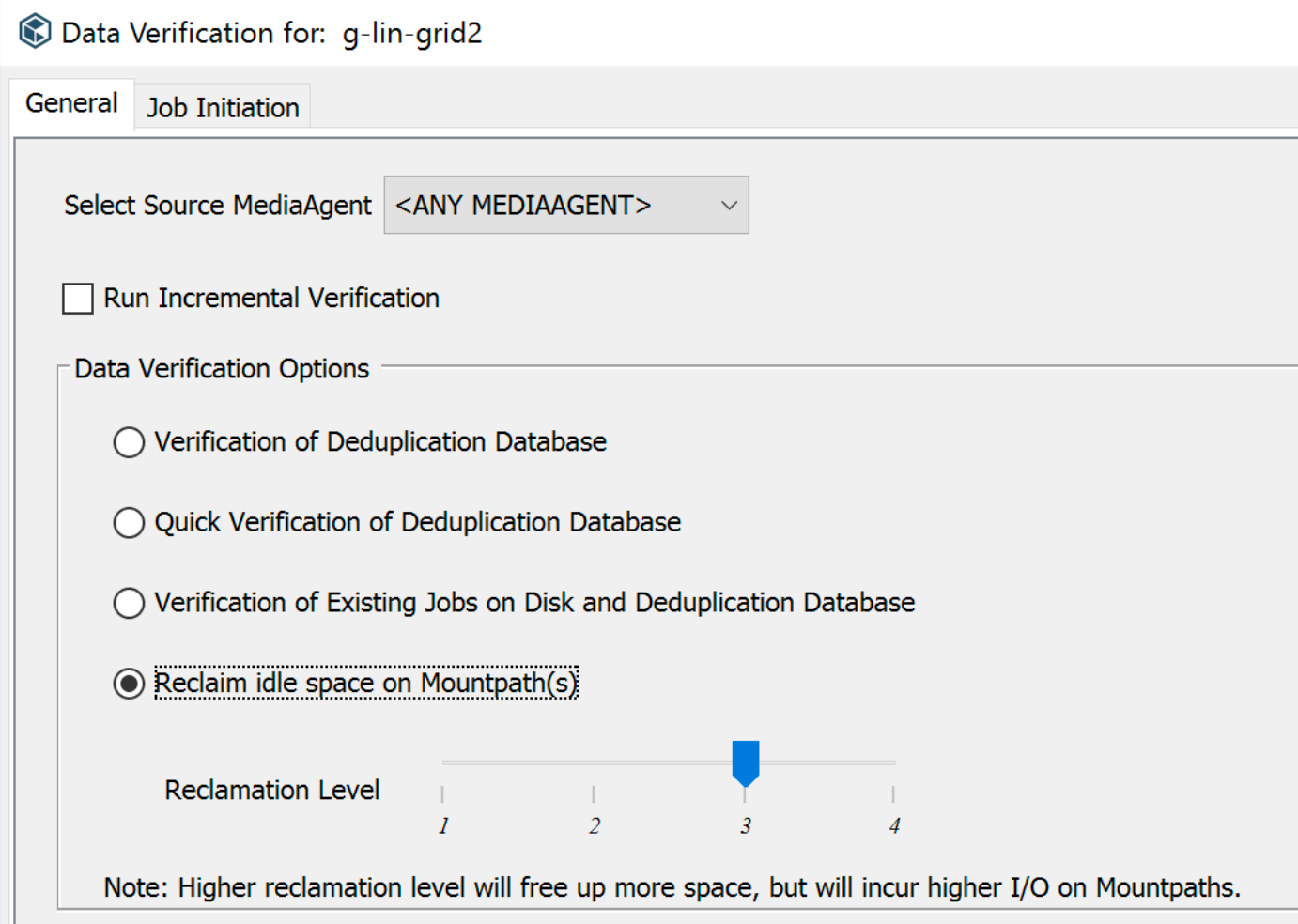

-----------------------------------------------------------

From here we can see that the mount paths hold some Dormant entries, which can be eliminated by running the DDB Verification job again with the "Reclaim idle space on Mountpath(s)" option.

The amount expected to be freed depends on the Reclamation Level (the smaller the threshold, the more aggressive the reclaim), so we can estimate the amount of data actually in use from these figures.
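As a rough worked example, the arithmetic behind that estimate can be checked against the sample log above. This is only a sketch: the variable names are mine, and treating Valid + Dormant as the space currently occupied (and subtracting Reclaimable to get the expected usage after a reclaim) is my reading of the numbers, not something taken from Commvault documentation.

```powershell
# Values copied from the "Blks Size (in Bytes)" and "Reclaimable" lines of the sample log.
$total      = 31593769131630
$valid      = 10422656366157
$phyRemoved = 20571721787482
$dormant    = 599390977991
$rec20      = 571610827524    # Reclaimable @ 20%

# Observed in this sample only: Total = Valid + PhyRemoved + Dormant.
$total -eq ($valid + $phyRemoved + $dormant)    # True

# Assumption: Valid + Dormant is what currently occupies the mount paths.
$onDisk = $valid + $dormant

# Both percentages reproduce the values printed in the log.
[math]::Round($dormant / $onDisk * 100, 2)      # 5.44
[math]::Round($rec20 / $onDisk * 100, 2)        # 5.19

# Assumption: expected usage after reclaiming at the 20% threshold.
$afterReclaim = $onDisk - $rec20
"{0:N2} TiB" -f ($afterReclaim / 1TB)
```

The percentages in the log are therefore relative to Valid + Dormant rather than to Total, which is why PhyRemoved does not enter the usage estimate.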

To collect this information automatically, I built a Workflow that runs the DDB Verification jobs and extracts these log entries. It runs at the end of every month for all DDBs, and the results are used as the basis for BET charging.

The stats can be extracted with PowerShell logic like the following (this is for a Windows MA; I have a separate version for Linux MAs):

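# Job ID of the DDB Verification job, substituted by the Workflow engine before the script runs

$jobid = xpath:{/workflow/variables/jobId}

# Collect this job's log lines; \s+ tolerates any amount of whitespace after the job ID

$bc = Select-String -Path ".\DDBMntPathInfo.log" -Pattern "${jobid}\s+Blks Count"

$bt = Select-String -Path ".\DDBMntPathInfo.log" -Pattern "${jobid}\s+Blks Size"

$rec = Select-String -Path ".\DDBMntPathInfo.log" -Pattern "${jobid}\s+Reclaimable.*GB"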

$sum = 0

foreach($l in $bc) { if($l -Match "Total \[(?<Total>.*?)\]") { $sum += $matches["Total"] }}

"Block Count (Total) = " + $sum / 2

$sum = 0

foreach($l in $bc) { if($l -Match "Valid \[(?<Valid>.*?)\]") { $sum += $matches["Valid"] }}

"Block Count (Valid) = " + $sum / 2

$sum = 0

foreach($l in $bc) { if($l -Match "PhyRemoved \[(?<PhyRemoved>.*?)\]") { $sum += $matches["PhyRemoved"] }}

"Block Count (PhyRemoved) = " + $sum / 2

$sum = 0

foreach($l in $bc) { if($l -Match "Dormant \[(?<Dormant>.*?),") { $sum += $matches["Dormant"] }}

"Block Count (Dormant) = " + $sum / 2

$sum = 0

foreach($l in $bt) { if($l -Match "Total \[(?<Total>.*?)\]") { $sum += $matches["Total"] }}

"Block Size (Total) = " + $sum / 2

$sum = 0

foreach($l in $bt) { if($l -Match "Valid \[(?<Valid>.*?)\]") { $sum += $matches["Valid"] }}

"Block Size (Valid) = " + $sum / 2

$sum = 0

foreach($l in $bt) { if($l -Match "PhyRemoved \[(?<PhyRemoved>.*?)\]") { $sum += $matches["PhyRemoved"] }}

"Block Size (PhyRemoved) = " + $sum / 2

$sum = 0

foreach($l in $bt) { if($l -Match "Dormant \[(?<Dormant>.*?),") { $sum += $matches["Dormant"] }}

"Block Size (Dormant) = " + $sum / 2

$sum = 0

foreach($l in $rec) { if($l -Match "Reclaimable: \@ 20% \[\s*(?<rec20>\d+)\s*\(") { $sum += $matches["rec20"] }}

"Reclaimable (20%) = " + $sum / 2

With a technique like this, we can pull information out of Commvault logs and use it for reporting.

But this is a one-off, customer-specific piece of work. There is no fixed format definition for Commvault logs, so every time we apply changes (especially SP/FR upgrades) we need to retest everything carefully and adjust the script if required.

Hope this kind of information helps.
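Because the log format can change, one inexpensive safeguard is to fail loudly when a line no longer parses and to sanity-check the parsed values. The sketch below is only an illustration: ConvertFrom-BlksLine is a hypothetical helper name of mine, and the check Total = Valid + PhyRemoved + Dormant is something I observed in the sample log above, not a documented guarantee.

```powershell
# Hypothetical helper: parse one "Blks Count" / "Blks Size" line into named fields.
function ConvertFrom-BlksLine {
    param([string]$Line)

    $pattern = 'Total \[\s*(?<Total>\d+)\],\s*Valid \[\s*(?<Valid>\d+)\],\s*' +
               'PhyRemoved \[\s*(?<PhyRemoved>\d+)\],\s*Dormant \[\s*(?<Dormant>\d+),'

    if ($Line -match $pattern) {
        return [pscustomobject]@{
            Total      = [long]$Matches['Total']
            Valid      = [long]$Matches['Valid']
            PhyRemoved = [long]$Matches['PhyRemoved']
            Dormant    = [long]$Matches['Dormant']
        }
    }

    # Stop instead of silently reporting 0 if the format has changed.
    throw "Unexpected log line format: $Line"
}

# Sample line taken from the log excerpt earlier in this post.
$sample = '9601 2581 10/31 08:06:34 22551699 Blks Size (in Bytes) - Total [31593769131630], Valid [10422656366157], PhyRemoved [20571721787482], Dormant [599390977991, 5.44%]'

$s = ConvertFrom-BlksLine -Line $sample

# Assumption based on the sample only: the three parts should add up to Total.
if ($s.Total -ne ($s.Valid + $s.PhyRemoved + $s.Dormant)) {
    Write-Warning 'Total does not equal Valid + PhyRemoved + Dormant; check the log format.'
}
```

Running a check like this after each SP/FR upgrade, before the monthly Workflow, would at least show whether the log lines still match what the script expects.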