Our Linux file server is a large VMware VM (48GB RAM, 8 virtual CPUs running on an Intel(R) Xeon(R) Gold 6248R CPU @ 3.00GHz) with about 500TB of storage on it. Our Linux admin has set that storage up as 12x 60TB (max size) volumes on our SAN, which connect back to VMware over iSCSI.

Performance is reasonable for our Linux users' day-to-day work on this VM, but certain customers and projects are reaching crazy levels of unstructured file storage. One customer has a single folder that holds 16TB of data across 41 million files. And while that’s our worst offender, the top 5 projects are all pretty similar.

We’ve been using the Linux File Agent installed in this VM since we started using Commvault in 2018. An incremental backup of this file server typically takes about 3 hours. Most of that time is spent scanning the entire volume, and the backup phase itself runs relatively quickly. We run three incremental backups per day, at 6am, noon, and 6pm.

However, the 16TB project mentioned above is now changing or touching 2 million files per day. The file agent is starting to take 10+ hours to complete a backup, so we’re missing a lot of our incrementals and falling behind.
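For a sense of scale, here is a rough back-of-envelope sketch in Python using only the figures quoted above. It assumes 1TB = 10^12 bytes and that the daily changes are spread evenly across our three incrementals, neither of which I have verified; the function names are just illustrative.

```python
# Back-of-envelope figures for the worst-offender project.
# Assumptions: 1 TB = 10**12 bytes, and daily changes are spread
# evenly across the three scheduled incrementals.
TOTAL_BYTES = 16 * 10**12       # 16TB in the folder
TOTAL_FILES = 41_000_000        # 41 million files
CHANGED_PER_DAY = 2_000_000     # files changed/touched per day
BACKUPS_PER_DAY = 3             # 6am, noon, 6pm


def average_file_size_kb(total_bytes: int, total_files: int) -> float:
    """Mean file size in kilobytes (1 KB = 1000 bytes)."""
    return total_bytes / total_files / 1000


def daily_change_fraction(changed: int, total: int) -> float:
    """Fraction of the file population touched per day."""
    return changed / total


def changed_files_per_backup(changed: int, backups: int) -> float:
    """Changed files each incremental would pick up if spread evenly."""
    return changed / backups


if __name__ == "__main__":
    print(f"average file size: {average_file_size_kb(TOTAL_BYTES, TOTAL_FILES):.0f} KB")
    print(f"daily change rate: {daily_change_fraction(CHANGED_PER_DAY, TOTAL_FILES):.1%}")
    print(f"changed files per incremental: {changed_files_per_backup(CHANGED_PER_DAY, BACKUPS_PER_DAY):,.0f}")
```

Under those assumptions the average file is roughly 390 KB, and just under 5% of the files in that folder change each day, so this is very much a many-small-files workload rather than a few large ones.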

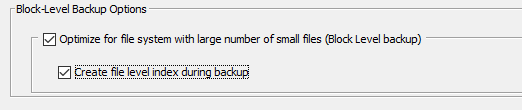

We are wondering what other organizations do with Commvault when their production file servers get this large. In 2018 we were using drive striping, so we couldn’t use IntelliSnap, but we’ve since stopped doing that. Is that what would be recommended now, moving to block-level backups instead of file-level backups? We did use block-level backups on our Windows file server (which held only about 90TB of data, not the 500TB of the Linux file server), but we found that a Browse action in Commvault would take 30-60 minutes just to start. I am wondering if a browse across a 500TB block-level backup would be even worse.

Any suggestions or options for using other components or functions of Commvault to deal with this huge amount of data on a single file server are appreciated.

I figure that in the grand scheme of things 500TB for a file server can’t be that large, right?