Hi!

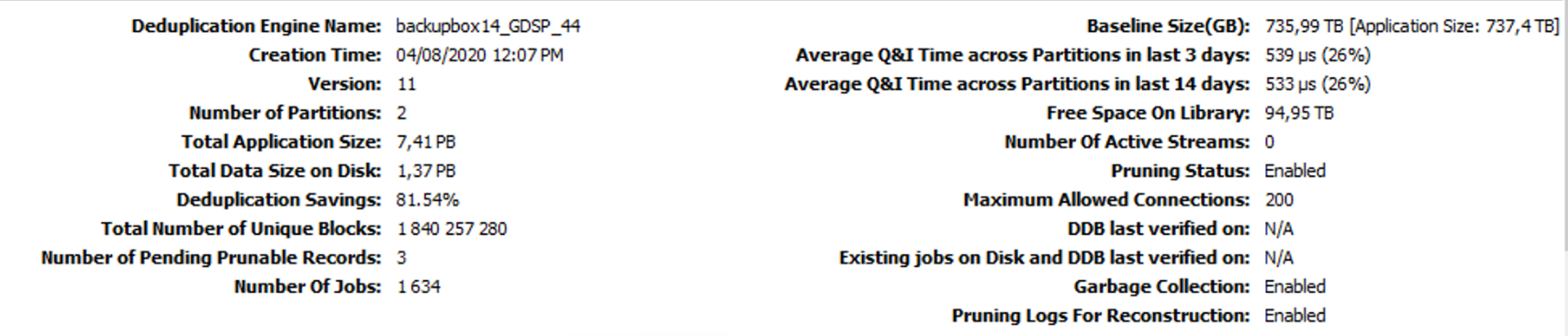

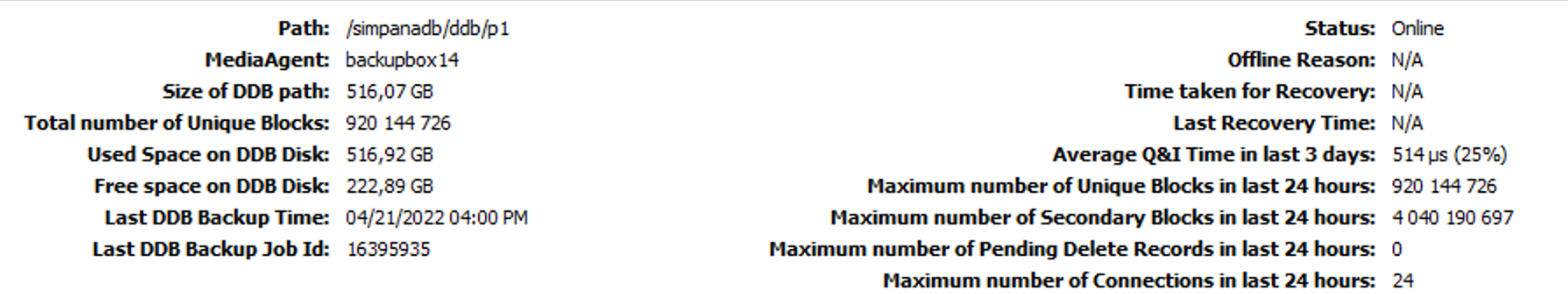

Some time ago we set up a CommCell dedicated to a Hadoop environment: one media agent, one DDB (two partitions, hosted on local SSDs), deduplication block size increased to 512 KB, and a disk library built from a few external SAN-attached storage arrays.

And it keeps growing :) A few incremental jobs a day, writing ~10 TB, with a synthetic full every 10 days. No performance issues so far. DDB Q&I ~ 500ns. Both backup and restore times are acceptable. It's more of an archive than a typical backup solution.

Last month I was asked to disable retention because of ongoing migration activities, and now it is growing even faster. I expect the disk library to reach 2 PB by the end of the year.

So I'm wondering when I'll hit the ceiling with this setup. I know it exceeds the media agent and deduplication sizing guidelines, but as long as Q&I times are fine, is everything acceptable? Or will I hit some other limit? It needs to survive until the end of this year! :)

Some numbers are in the attached screenshots.

Thanks!