Hi there,

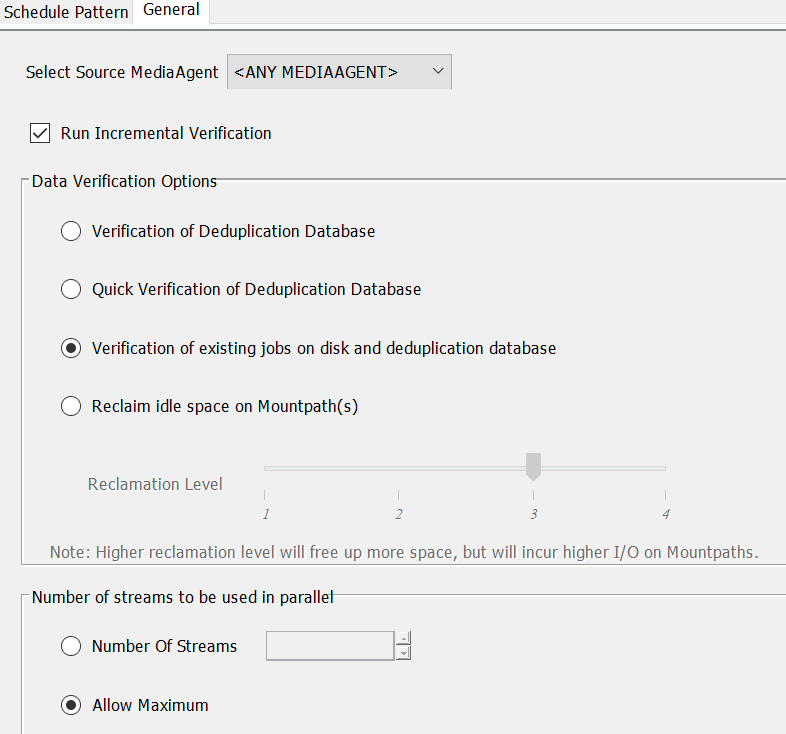

Is it safe to limit the number of streams used in parallel by the System Created DDB Verification schedule policy? I am asking because in our case this verification policy consumes 30 out of 30 streams. However, the policy is set to use the maximum number of streams, so why is the limit 30?

Secondly, I would like to discuss the Data Verification Options. Which option do you prefer to use? Would it be enough to use only "Verification of Deduplication Database" instead of "Verification of existing jobs on disk and deduplication database"?