Hello there.

Over the last couple of months, a few customers have asked me where all the data growth on their disk libraries is coming from. While digging deeper into the topic, I tried to understand the data and statistics from the DDBs. I had to admit that some of those statistics look very odd to me and that I don’t understand them. So maybe someone can enlighten me.

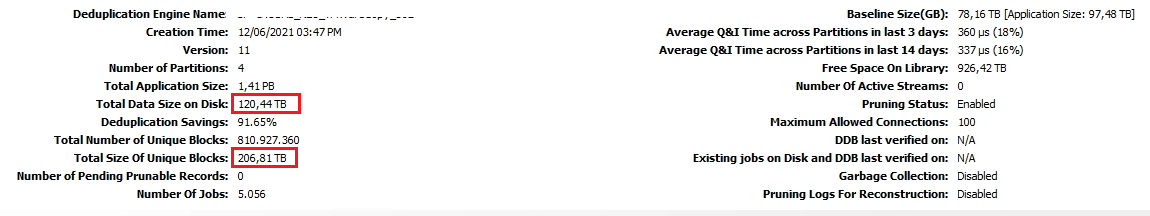

Let’s take a look at a screenshot from statistics of a “normal” DDB:

So what do we have here:

Deduplication Engine Name: self-explanatory

Creation Time: self-explanatory

Version: I just take this as it is, but is there anything out there other than Version 11? Does it refer to Commvault 11? I thought a recent DDB should be Version 5 (or 4.2 if you prefer) and a somewhat older DDB should be Version 4. So what does “11” mean?

Total Application Size: AFAIK this is the total data that got backed up into this DDB. All fulls, incrementals and so on before compression and dedupe.

Total Data Size on Disk: Seems to be self-explanatory

Deduplication Savings: Simple Math: 1 - (Total Data Size on Disk / Total Application Size)
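To make sure I read that formula correctly, here is a minimal sketch of how I would calculate it. The function name and the example values are made up by me and are not taken from any screenshot or from Commvault itself:

```python
def dedup_savings(total_application_size, total_data_size_on_disk):
    """Return the savings as a fraction (0.8 means 80 %).

    Both sizes must be in the same unit (e.g. GB).
    """
    if total_application_size <= 0:
        raise ValueError("Total Application Size must be positive")
    return 1 - (total_data_size_on_disk / total_application_size)


# Hypothetical values: 100 TB application size, 20 TB on disk
print(f"{dedup_savings(100.0, 20.0):.1%}")
```

With these made-up numbers the result would be savings of 80 %.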

Total Number of Unique Blocks: Simply the number of unique blocks. At first I thought that you should be able to multiply this by the block size (typically 128 or 512 KB) and get the Total Data Size on Disk. But I have already learned that data normally gets compressed after signature generation, so a stored block is smaller than the block size. You can read about that here: https://community.commvault.com/commvault-q-a-2/the-difference-in-the-number-of-unique-blocks-in-deduplication-databases-1510
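So as far as I understand it, number of blocks times block size can only be an upper bound for the size of the unique blocks, not the real value. A rough sketch of that calculation, where the function name and the block count are just my own example and the 128 KB default is an assumption about the DDB's block size:

```python
def max_unique_block_size_gb(unique_blocks, block_size_kb=128):
    """Upper bound for the size of all unique blocks in GB.

    Assumes every block is completely full and not compressed at all,
    so the real value should be lower than this.
    """
    return unique_blocks * block_size_kb / (1024 ** 2)


# Hypothetical example: 1,000,000 unique blocks with 128 KB block size
print(f"{max_unique_block_size_gb(1_000_000):.2f} GB")
```

For one million blocks this gives roughly 122 GB as the ceiling, and compression should bring the actual size below that.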

Total Size Of Unique Blocks: Is this before or after compression?

Number of Pending Prunable Records: The records that data aging identified for aging and that still need to be pruned.

Number Of Jobs: self-explanatory

Baseline Size(GB): AFAIK the baseline is the size of one full backup of everything (before compression and dedupe).

What about the Application Size in this row? I would guess this is the size of all fulls + incrementals of the last(?) backup cycle. Correct?

The rest of the statistics are IMO self-explanatory.

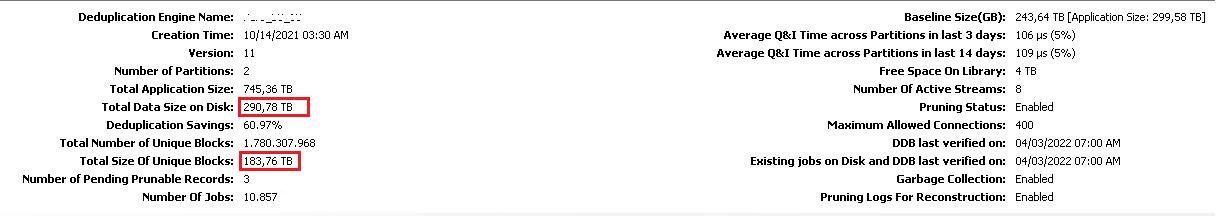

Now let’s look at some statistics that seem to be weird:

In my opinion we have two things that occupy space on the disk library: the unique blocks and some metadata. So the Total Data Size on Disk should be a bit higher than the Total Size Of Unique Blocks, right?

But what happened to the DDB on the former screenshot? Why is the Size on Disk so much higher?

This one is even worse. Why on earth is the Total Data Size on Disk much lower than the Total Size of Unique Blocks? Is this even possible?
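To put my expectation into concrete terms, this is roughly the check I am doing in my head. It is only a sketch of my own assumption: the function name is made up, and the 10 % allowance for metadata is a number I picked, not something documented anywhere:

```python
def check_ddb_sizes(size_on_disk_gb, unique_blocks_gb, metadata_allowance=0.10):
    """Compare Total Data Size on Disk with Total Size Of Unique Blocks.

    metadata_allowance is a guessed tolerance for metadata overhead.
    """
    if size_on_disk_gb < unique_blocks_gb:
        return "on disk smaller than unique blocks"
    if size_on_disk_gb > unique_blocks_gb * (1 + metadata_allowance):
        return "on disk much larger than unique blocks"
    return "as expected"


# Hypothetical values in GB, not from the screenshots
print(check_ddb_sizes(105, 100))
print(check_ddb_sizes(200, 100))
print(check_ddb_sizes(50, 100))
```

Only the first case matches what I would expect. The other two are the situations I can't explain.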

That’s it for now. Hopefully somebody can give some insights into this.

Regards

Pasqual