I've seen several discussions on this topic, but I still don't get it.

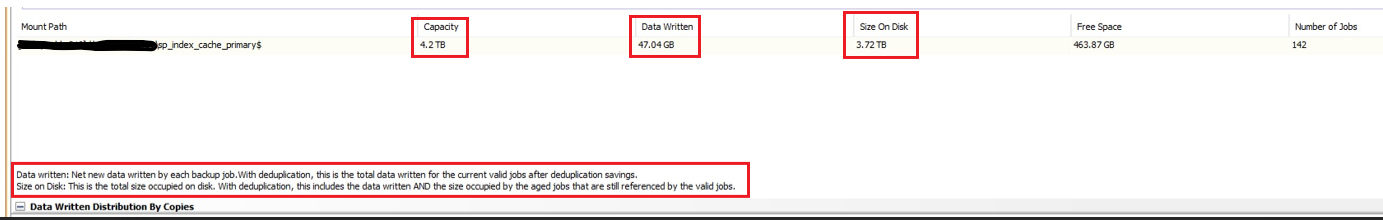

From the docs:

Application Size:

The size of the data that needs to be backed up from a client computer. Application size is not always identical to the size reported by file or database management tools.

Data Written:

New data written on media by each backup job.

Size on Media:

Size of deduplicated data written on the media.

Consider the following real job:

Job 1 (destination: deduplicated disk library):

Application Size: 33.4 TB

Data Written: 24.93 TB

Size on Media: 7.30 TB
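For comparison, here is how the three reported figures relate to each other. This is plain arithmetic on the numbers above. It assumes all three values are in TB and makes no assumption about what each metric includes.

```python
# Figures reported for Job 1 (assumption: all three values are in TB).
app_size_tb = 33.4
data_written_tb = 24.93
size_on_media_tb = 7.30


def pct_of(part: float, whole: float) -> float:
    """Return `part` as a percentage of `whole`."""
    return 100.0 * part / whole


written_pct = pct_of(data_written_tb, app_size_tb)
media_pct = pct_of(size_on_media_tb, app_size_tb)
media_vs_written_pct = pct_of(size_on_media_tb, data_written_tb)
reduction_factor = app_size_tb / size_on_media_tb  # Application Size : Size on Media

print(f"Data Written  / Application Size: {written_pct:.1f}%")
print(f"Size on Media / Application Size: {media_pct:.1f}%")
print(f"Size on Media / Data Written:     {media_vs_written_pct:.1f}%")
print(f"Application Size / Size on Media: {reduction_factor:.2f}x")
```

So Data Written is about 74.6% of the Application Size, and Size on Media is about 21.9% of the Application Size (about 29.3% of Data Written), a reduction of roughly 4.58x.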

1st question: what is the total space this job occupies in the deduplicated disk library?

2nd question: is Data Written the size before deduplication? Does it reflect software compression only, or both compression and deduplication?

3rd question: is Size on Media just the size of the unique blocks for this job (i.e., not counting the baseline)?
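To make the distinction in the 3rd question concrete, here is a toy block-level deduplication model in Python. It is not how Commvault computes its figures: the block size, the SHA-256 signatures, and the `backup` helper are all hypothetical. For a job that runs after a baseline, it reports two candidate readings of "size of deduplicated data": the bytes of new unique blocks the job added to the store, and the bytes of all unique blocks the job references, including blocks the baseline already stored.

```python
import hashlib

# Toy model only: block size and hashing are hypothetical, not Commvault internals.
BLOCK_SIZE = 4  # bytes; real deduplication engines use far larger blocks


def split_blocks(data: bytes) -> list[bytes]:
    """Split data into fixed-size blocks (the last block may be shorter)."""
    return [data[i:i + BLOCK_SIZE] for i in range(0, len(data), BLOCK_SIZE)]


def backup(data: bytes, store: dict[str, bytes]) -> tuple[int, int]:
    """Hypothetical dedup backup into `store` (signature -> block).

    Returns (new_bytes, referenced_bytes):
      new_bytes        bytes of unique blocks this job added to the store
      referenced_bytes bytes of all unique blocks this job points to,
                       including blocks the store already held
    """
    referenced: dict[str, int] = {}
    new_bytes = 0
    for block in split_blocks(data):
        sig = hashlib.sha256(block).hexdigest()
        referenced[sig] = len(block)
        if sig not in store:
            store[sig] = block
            new_bytes += len(block)
    return new_bytes, sum(referenced.values())


store: dict[str, bytes] = {}

# Baseline (first) job: every block is new.
baseline = b"AAAABBBBCCCCDDDD"
baseline_new, baseline_ref = backup(baseline, store)

# Later job: two blocks match the baseline, and one block repeats within the job.
job = b"AAAABBBBEEEEEEEE"
job_new, job_ref = backup(job, store)

print(f"Baseline: size={len(baseline)} new={baseline_new} referenced={baseline_ref}")
print(f"Job:      size={len(job)} new={job_new} referenced={job_ref}")
```

Here the later job has a size of 16 bytes, adds only 4 bytes of new blocks, and references 12 bytes of unique blocks. The 3rd question is essentially which of these two numbers Size on Media corresponds to.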

Regards,

Pedro