Hello,

We are seeing a very heavy random read load on our Hitachi G350 backup storage arrays, which use NL-SAS disks. These random reads are consuming all of the arrays' available performance. We have two G350s on campus and a third at a remote site. Commvault runs copy jobs between these three G350s.

The DDB is on local NVMe in the MediaAgent, as is the Index Cache disk.

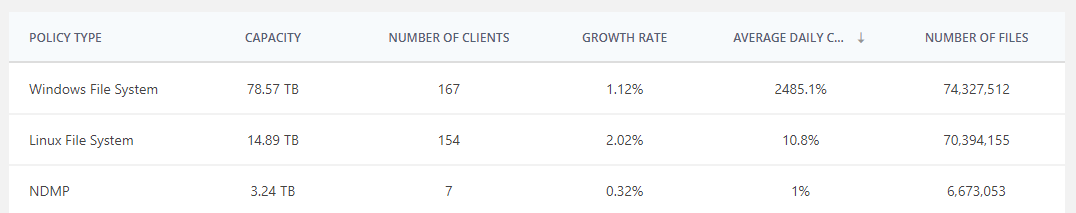

We ran several analyses, and Live Optics reported a daily change rate of 334.9%. This is mainly due to the Windows File System policy, for which we see a daily change rate of 2485.1%.

Does anyone know how the random read load could be reduced? As things stand, our disk backup is unusable. What steps could we take to optimize the Commvault configuration?

Thanks for your help!